In February 2020, the WHO Director-General Tedros Ghebreyesus said misinformation about COVID-19 is just as dangerous as the virus itself. “We are not just fighting an epidemic; we are fighting an ‘infodemic.’ Fake news spreads faster and more easily than the virus and is just as dangerous.”[i]

In this post, the fourth installment in Sustainalytics’ COVID-19 blog series, we focus on the proliferation of misinformation and fake news about the pandemic, and what media companies are doing about it.

The proliferation of misinformation

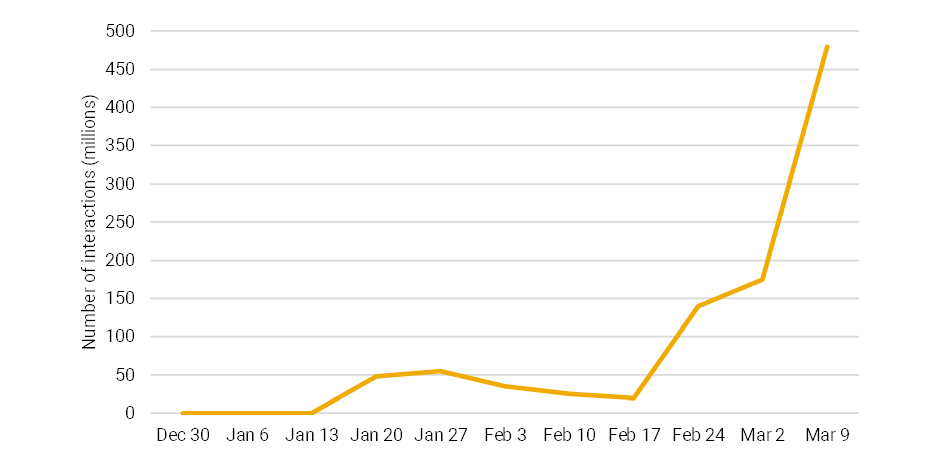

Unsurprisingly, data from Google Trends and media aggregators indicate that COVID-19-related content has spiked in recent weeks. According to content aggregator NewsWhip, COVID-19 interactions accounted for 48% of all content engagements in March, reaching almost 500 million per week early in the month, as shown below in Figure 1.

This means that social media is currently rife with information about coronavirus, some of it accurate, some of it less so. While many doubt the integrity of this information (data recently published by Statista suggests that 60 percent of US citizens do not trust social media to provide accurate information about COVID-19), the flood of content has heightened what were already significant pressures on social media companies to define their editorial responsibilities.[ii]

FIGURE 1: NUMBER OF COVID-19 INTERACTIONS*

* Interactions include the combined impact from Facebook (shares, likes, comments), Pinterest pins, and Twitter influencer shares

Source: NewsWhip[iii]

Broader ramifications

In addition to putting social media companies under the spotlight, the coronavirus pandemic may be the push the US government has needed to change Section 230 of the Communications Decency Act. Enacted in 1996, the law allows online platforms to moderate users’ content without being treated as publishers, limiting their liability for users’ posts.

This legislation has served as the backbone for the modern internet in that it allows for freedom of speech. There is one passage that is particularly relevant: “Intermediaries that host or republish speech are protected against a range of laws that would be used to hold them legally responsible for what others say and do.”[iv]

The US Department of Justice (DoJ) has been considering changes to require further moderation efforts from the tech giants. In February 2020, it hosted a workshop to examine the scope of the law, and panelists were vocally critical of how big tech has moderated content.[v]

Critics of the law argue that Section 230 has enabled platforms to absolve themselves of responsibility for policing their platforms, while allowing them to block or remove content selectively with impunity.

As Ranking Digital Rights points out, social media companies already deploy content-shaping algorithms. These are systems that determine the content that each individual user sees online, including user-generated or organic posts and paid advertisements.[vi]

As social media companies engage much more actively in serving content to users through algorithms and other mechanisms, the line between passively hosting third-party speech and actively curating content is beginning to blur.

Big Tech’s response

Alphabet, Facebook and Twitter have each announced measures to stem the flow of COVID-19 misinformation.

Alphabet has prioritized search results linked to World Health Organization (WHO) resources, while Facebook has committed USD 100 million to local news teams to support high-quality, accurate reporting.

Twitter has begun removing any content that denies expert guidance, encourages fake or ineffective treatments or falsely purports to be from experts or authorities.

A case in point is hydroxychloroquine, a promising but as yet unproven treatment for COVID-19. Twitter recently removed tweets by Rudy Giuliani (President Trump’s personal attorney) and Brazilian President Bolsonaro for breaching its COVID-19 misinformation rules by touting the drug as an effective measure.

Facebook also removed Instagram videos of Bolsonaro in which he promoted hydroxychloroquine as an effective treatment.[vii]

In addition to these measures, Alphabet, Facebook, Twitter and Microsoft have agreed to jointly combat fraud and misinformation related to COVID-19 across their platforms, an unprecedented act of cooperation.

Sustainalytics’ assessment

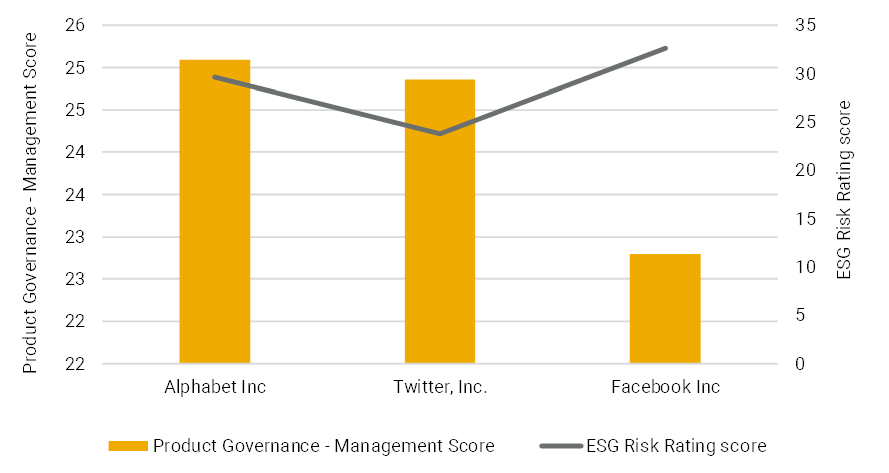

Despite these announcements, the social media giants mentioned above score poorly on Sustainalytics’ Product Governance material ESG issue (MEI), which encapsulates risks related to editorial guidelines, media ethics and content governance.

As shown in Figure 2, Twitter and Facebook have weak management scores on this MEI (i.e. scores < 25), while Alphabet’s sits on the cusp. A lack of transparency into content moderation systems is common to all three firms.

While these companies’ new policies on COVID-19 misinformation and fake news are promising, only time will tell whether these social media monoliths will maintain more rigorous internal content governance mechanisms that augment their product governance systems and effectively moderate the content on their platforms.

Facebook, for example, has developed a curated News offering, tweaked its algorithms to promote official accounts for its 2.4 billion users and removed false content about coronavirus in ways that the social networking giant previously said it never would.[viii]

Watch for lawmakers to push companies to keep using these tactics on other hot-button content issues when the coronavirus pandemic eventually ebbs.[ix]

FIGURE 2: COMPARING PRODUCT GOVERNANCE AND ESG RISK RATING SCORES

Source: Sustainalytics

Sources:

[i] https://www.who.int/dg/speeches/detail/munich-security-conference

[ii] https://www.statista.com/statistics/1104569/least-trusted-news-sources-coronavirus-us/

[iii] https://go.newswhip.com/2020_03_Covid-19_TY.html?aliId=eyJpIjoiQ2dtM0UzM0p1Z242TEhQRCIsInQiOiJpYW9cL0JpcGVYMXZyOWRZdmh1elFFdz09In0%253D

[iv] https://www.eff.org/issues/cda230

[v] https://www.theverge.com/2020/2/19/21144223/justice-department-section-230-debate-liability-doj

[vi] https://www.newamerica.org/oti/reports/its-not-just-content-its-business-model/

[vii] https://www.theverge.com/2020/3/30/21199845/twitter-tweets-brazil-venezuela-presidents-covid-19-coronavirus-jair-bolsonaro-maduro

[viii] https://www.politico.com/news/2020/03/12/social-media-giants-are-fighting-coronavirus-fake-news-its-still-spreading-like-wildfire-127038

[ix] https://www.politico.com/news/2020/03/12/social-media-giants-are-fighting-coronavirus-fake-news-its-still-spreading-like-wildfire-127038
